SCD Type 2 Implementation using Informatica PowerCenter

Slowly Changing Dimension Type 2 also known SCD Type 2 is one of the most commonly used type of Dimension table in a Data Warehouse. SCD Type 2 dimension loads are considered to be complex mainly because of the data volume we process and because of the number of transformation we are using in the mapping. Here in this article, we will be building an Informatica PowerCenter mapping to load SCD Type 2 Dimension.

Understand the Data Warehouse Architecture

Before we go to the mapping design, Lets understand the high level architecture of our Data Warehouse.

Slowly Changing Dimension Series

Part I : SCD Type 1.

Part II : SCD Type 2.

Part III : SCD Type 3.

Part IV : SCD Type 4.

Part V : SCD Type 6.

Part I : SCD Type 1.

Part II : SCD Type 2.

Part III : SCD Type 3.

Part IV : SCD Type 4.

Part V : SCD Type 6.

Here we have a staging schema, which is loaded from different data sources after the required data cleansing. Warehouse Tables are loaded from the staging schema directly. Both staging tables and the warehouse tables are in two different schemas with in a single database instance.

Understand the Staging and Dimension Table.

Staging Table

In our staging table, we have all the columns required for the dimension table attributes. So no other tables other than Dimension table will be involved in the mapping. Below is the structure of our staging table.

- CUST_ID

- CUST_NAME

- ADDRESS1

- ADDRESS2

- CITY

- STATE

- ZIP

Key Points :

- Staging table will have only one days data.

- Data is uniquely identified using CUST_ID.

- All attribute required by Dimension Table is available in the staging table.

Dimension Table

Here is the structure of our Dimension table.

- CUST_KEY

- AS_OF_START_DT

- AS_OF_END_DT

- CUST_ID

- CUST_NAME

- ADDRESS1

- ADDRESS2

- CITY

- STATE

- ZIP

- CHK_SUM_NB

- CREATE_DT

- UPDATE_DT

Key Points :

- CUST_KEY is the surrogate key.

- CUST_ID, AS_OF_END_DT is the Natural key, hence the unique record identifier.

- Record versions are kept based on Time Range using AS_OF_START_DT, AS_OF_END_DATE

- Active record will have an AS_OF_END_DATE value 12-31-4000

- Checksum value of all dimension attribute columns are stored into the column CHK_SUM_NB

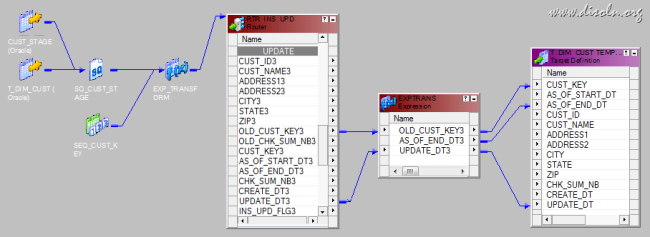

Mapping Building and Configuration

Now we understand the ETL Architecture, Staging Table, Dimension Table and the design considerations, we can go to the mapping development. We are splitting the mapping development into six steps.

- Join Staging Table and Dimension Table

- Data Transformation

- Generate Surrogate Key

- Generate Checksum Number

- Other Calculations

- Identify Insert/Update

- Insert the new Records

- Update(Expire) the Old Version

- Insert the new Version of Updated Record

1. Join Staging Table and Dimension Table

We are going to OUTER JOIN both the Staging (Source) Table and the Dimension (Target) Table using the SQL Override below. An OUTER Join gives you all the records from the Staging table and the corresponding records from Dimension table. if it is there is no corresponding record in the Dimension table, it returns NULL values for the Dimension table columns.

SELECT--Columns From Staging (Source) Tables CUST_STAGE.CUST_ID,CUST_STAGE.CUST_NAME,CUST_STAGE.ADDRESS1,CUST_STAGE.ADDRESS2,CUST_STAGE.CITY,CUST_STAGE.STATE,CUST_STAGE.ZIP,--Columns from Dimension (Target) Tables.T_DIM_CUST.CUST_KEY,T_DIM_CUST.CHK_SUM_NBFROM CUST_STAGE LEFT OUTER JOIN T_DIM_CUSTON CUST_STAGE.CUST_ID = T_DIM_CUST.CUST_ID -- Join On the Natural KeyAND T_DIM_CUST.AS_OF_END_DT = TO_DATE('12-31-4000','MM-DD-YYYY') – Get the active record.

2. Data Transformation

Now map the columns from the Source Qualifier to an Expression Transformation. When you map the columns to the Expression Transformation, rename the ports from Dimension Table with OLD_CUST_KEY, CUST_CHK_SUM_NB and add below expressions.

- Generate Surrogate Key : A surrogate key will be generated for each and every record inserted in to the Dimension table

- CUST_KEY : Is the surrogate key, This will be generated using a Sequence Generator Transformation

- Generate Checksum Number : Checksum number of all dimension attributes. Difference in the Checksum value between the incoming and Checksum of the Dimension table record will indicate a changed column value. This is an easy way to identify changes in the columns than comparing each and every column.

- CHK_SUM_NB : MD5(TO_CHAR(CUST_ID) || CUST_NAME || ADDRESS1 || ADDRESS2 || CITY || STATE || TO_CHAR(ZIP))

- Other Calculations :

- Effective Start Date : Effective start date of the Record

- AS_OF_START_DT : TRUNC(SYSDATE)

- Effective end date : Effective end date of the Record,

- AS_OF_END_DT : TO_DATE('12-31-4000','MM-DD-YYYY')

- Record creation date : Record creation timestamp, this will be used for the records inserted

- CREATE_DT : TRUNC(SYSDATE)

- Record updating date : Record updating timestamp, this will be used for records updated.

- UPDATE_DT : TRUNC(SYSDATE)

3. Identify Insert/Update

In this step we will identify the records for INSERT and UPDATE.

- INSERT : A record will be set for INSERT if the record is not exist in the Dimension Table, We can identify the New records if OLD_CUST_KEY is NULL, which is the column from the Dimension table

- UPDATE : A record will be set for UPDATE, if the record is already existing in the Dimension table and any of the incoming column from staging table has a new value. If the column OLD_CUST_KEY is not null and the Checksum of the incoming record is different from the Checksum of the existing record (OLD_CHK_SUM_NB <> CHK_SUM_NB), the record will be set for UPADTE

- Following expression will be used in the Expression Transformation port INS_UPD_FLG shown in the previous step

- INS_UPD_FLG : IIF(ISNULL(OLD_CUST_KEY), 'I', IIF(NOT ISNULL(OLD_CUST_KEY) AND OLD_CHK_SUM_NB <> CHK_SUM_NB, 'U'))

Now map all the columns from the Expression Transformation to a Router and add two groups as below

- INSERT : IIF(INS_UPD_FLG = 'I', TRUE, FALSE)

- UPDATE : IIF(INS_UPD_FLG = 'U', TRUE, FALSE)

4. Insert The new Records

Now map all the columns from the ‘INSERT’ group to the Dimension table instance T_DIM_CUST. While mapping the columns, we don’t need any column named OLD_, which is pulled from the Dimension table.

5. Update(Expire) the Old Version

The records which are identified for UPDATE will be inserted into a temporary table T_DIM_CUST_TEMP. These records will then be updated into T_DIM_CUST as a post session SQL. You can learn more about this performance improvement technique from one of our previous post.

We will be mapping below columns from ‘UPDATE’ group of the Router Transformation to the target table. To update(expire) the old record we just need the columns below list.

- OLD_CUST_KEY : To uniquely identify the Dimension Column.

- UPDATE_DATE : Audit column to know the record update date.

- AS_OF_END_DT : Record will be expired with previous days date.

While we map the columns, AS_OF_END_DT will be calculated as ADD_TO_DATE(TRUNC(SYSDATE),'DD',-1) in an Expression Transformation. Below image gives the picture of the mapping.

6. Insert the new Version of Updated Record

The records which are identified as UPDATE will have to have a new(active) version inserted. Map all the ports from the ‘UPDATE’ group of the Router Transformation to target instance T_DIM_CUST. While mapping the columns, we don’t need any column named OLD_, which is pulled from the Dimension table.

Workflow and Session Creation

During the session configuration process, add the below SQL as part of the Post session SQL statement as shown below. This correlated update SQL will update the records in T_DIM_CUST table with the values from T_DIM_CUST_TEMP. Like we mentioned previously, this is a performance improvement technique used to update huge tables.

UPDATE T_DIM_CUST SET

(T_DIM_CUST.AS_OF_END_DT,

T_DIM_CUST.UPDATE_DT) =

(SELECT

T_DIM_CUST_TEMP.AS_OF_END_DT,

T_DIM_CUST_TEMP.UPDATE_DT

FROM T_DIM_CUST_TEMP

WHERE T_DIM_CUST_TEMP.CUST_KEY = T_DIM_CUST.CUST_KEY) WHERE EXISTS

(SELECT 1

FROM T_DIM_CUST_TEMP

WHERE T_DIM_CUST_TEMP.CUST_KEY = T_DIM_CUST.CUST_KEY)

Now lets look at the data see how it looks from the below image.

Hope you guys enjoyed this. Please leave us a comment in case you have any questions of difficulties implementing this.